SNERX.COM/MATH Last Updated 2026/4/26 • Read Time 15min • Discord

________________________________________________________________________________________________________________

I never learned math in school, so as an adult I have been trying to teach it to myself. The things I have found on this page are probably wrong, and the things I am not wrong about were likely discovered long ago. I've learned a bit by exploring the OEIS and Wolfram Alpha.

Separate Cardinalities of Zero: Infinity and zero share a lot of properties. You can multiply or divide infinity by any number and it is still infinity. You can multiply or divide zero by any number and it is still zero. Neither infinity nor zero is a number, since they both signify the lack of quantity. Both represent limits of calculation, since infinity is an upper bound to quantity and zero is a lower bound to quantity. Further, dividing any number by zero returns infinity and dividing any number by infinity returns zero.

Given the similarities, and since there are different kinds of infinities, I wondered if there is also a difference in kinds of zeros. You would think we couldn't use the same diagonalization method for zeros that Cantor uses for infinities, since infinite lists can be enumerated by all the real numbers and zero is just one entity, but by using infinitesimals like 0.001 or 0.0099 (each with an infinite run of zeros before its final digits), we have an infinitely enumerable set of zeros we can index and apply diagonalization to, just the same as Cantor did.

The objection to this would be that infinitesimals are not distinct values and do not qualify as being distinct in identity, but I have no idea if this is true.

Infinite Series & Randomness: If you have an infinite series of whole numbers from one to positive infinity, and you randomly select a number from that series, then the number of digits of that selected number will also be infinite. That is, the average digit length of the numbers in the series 1-9 is 1, since each number in the series is 1 digit long, but the average length for the series 1-99 is closer to 2, and the average length for the series 1-999 is closer to 3, and so on, trending towards infinity. So the average number of digits for numbers in a series of integers from one to infinity is infinite. Average means that roughly half the numbers in the series are longer than the other half, and roughly half are shorter. But half of infinity is still infinity, thus all the numbers available to pick must be infinite in length. But no natural number can be infinite in length, so what gives?
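The trend in the averages can be checked numerically. This is a minimal sketch, not a proof of anything: the function name `average_digit_length` is made up for illustration, and it only covers finite ranges of the form 1 to 10^k - 1.

```python
def average_digit_length(k: int) -> float:
    """Average number of decimal digits among the integers 1 .. 10**k - 1.

    There are 9 * 10**(d - 1) integers with exactly d digits, so the total
    digit count is the sum of d * 9 * 10**(d - 1) for d = 1 .. k.
    """
    total_digits = sum(d * 9 * 10 ** (d - 1) for d in range(1, k + 1))
    count = 10 ** k - 1
    return total_digits / count


# The average climbs with k but stays below k for every finite range.
for k in range(1, 7):
    print(k, round(average_digit_length(k), 4))
```

For every finite cutoff the average is finite and no number in the range has more than k digits; the average only grows without bound as the cutoff itself does.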

Conversely, there is no real randomness in infinity. If you are to randomly pick a number from one to ten, each number has a one-out-of-ten chance of being picked, but if you are to randomly pick a number from one to infinity, each number has infinitely low odds of being picked. I posit that since these odds asymptotically approach zero, the odds of randomly picking any number out of an infinite series are truly zero, meaning you simply could not pick a number. As further explanation, James Torre pointed me to the impossibility of a uniform probability measure on the naturals as the reason this random selection fails.
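The measure argument can be written out in one line. This is a sketch under the usual assumption that probabilities are countably additive, with p standing for the single probability that every natural number would have to share (the symbol p is my own; the `cases` environment needs `amsmath`):

```latex
% Assume each natural number n is picked with the same probability p.
\[
  \sum_{n=1}^{\infty} P(\{n\}) \;=\; \sum_{n=1}^{\infty} p \;=\;
  \begin{cases}
    0      & \text{if } p = 0, \\
    \infty & \text{if } p > 0.
  \end{cases}
\]
% The total probability must equal 1, and neither case gives 1,
% so no uniform, countably additive distribution on the naturals exists.
```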


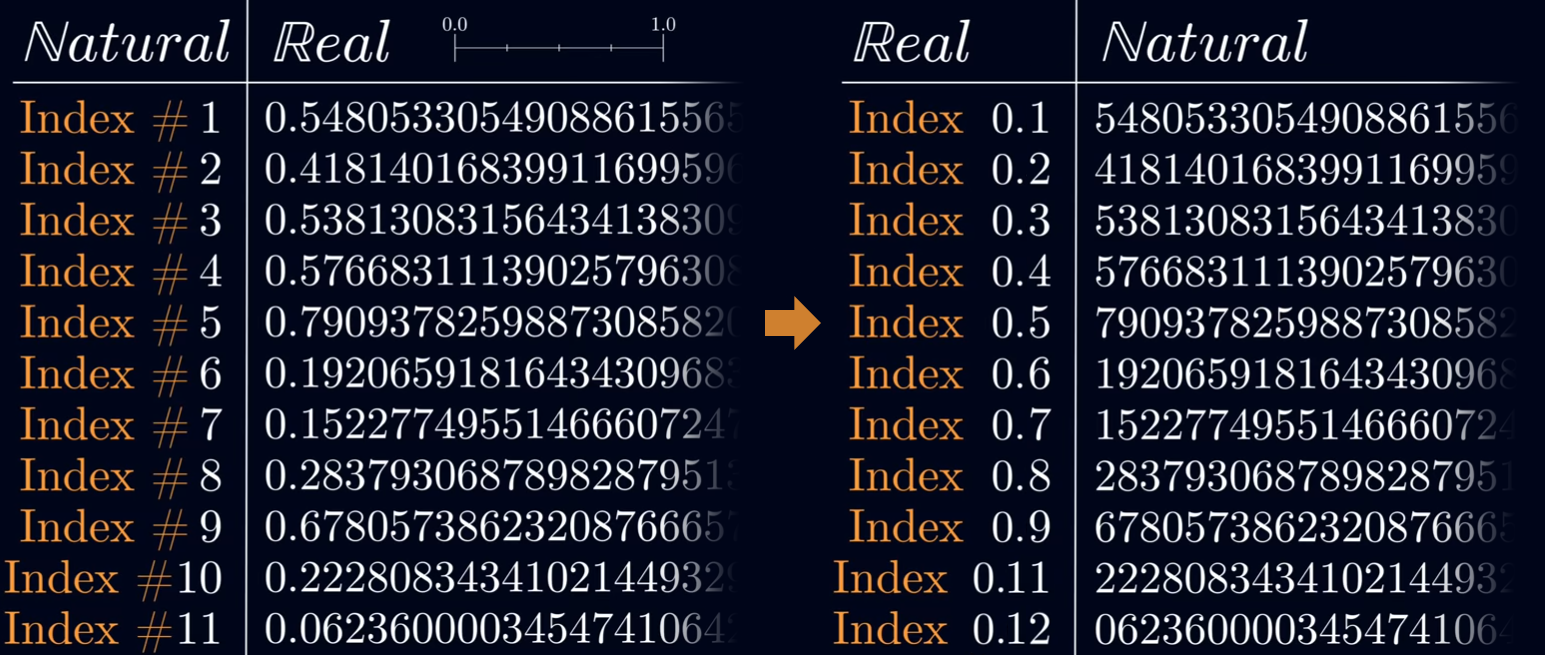

Weirdness With Cantor's Diagonalization: This is a weird way to argue for ℤ and ℝ not having separate cardinalities. There are three quirks with diagonalization. The first occurs when swapping the lists, that is, when you put the natural numbers in a list indexed by the real numbers instead of the other way around (the way Cantor does it). If this were valid, the conclusion would be that the set of natural numbers is larger than the set of real numbers (and uncountable, with the reals then being the countable set), a contradiction since the set of real numbers contains the natural numbers. To demonstrate this, look at the following images modified from a Veritasium video.

Above, you see all I have done is swapped the natural index numbers on the left with the list of real numbers on the right from 0.0 to 1.0. The randomized list of natural numbers is enumerated just the same in the right list as the real numbers are in the left list. All we do now is apply the diagonalization technique Cantor uses on the new list the same as the old list, shown below.

What you see here is that the new natural number we generate from the diagonalization method similarly 'does not appear in the list', much the same as the new real number Cantor generates from the diagonalization method. My version with the swapped lists is directly addressed here and the above issue is considered a non-problem because natural numbers cannot be infinite in length. However, it's not impossible to have infinitely long numbers since we do have some numbers that are infinite in length, like the p-adic numbers. So maybe this is still worth considering.

For the second quirk, I believe his derivation of different cardinalities is due to his mixing of potential infinity with actual infinity, something already known to be improper in physics as far back as Aristotle, who first drew the distinction. Whether you use Cantor's original list or my reversed list, both ways require the use of potential infinity for the index numbers and actual infinity for the numbers enumerated to the right of the index. So of course these would appear to be different kinds of infinity, because you have begged the question and baked a presupposed conclusion into the formulation of the proof.

The third quirk with Cantor's diagonalization is that it requires the ordering of his real numbers to be random, as reordering the list from smallest to largest real number demonstrates the diagonalized new number in fact already appears on the list. If Cantor's list were in order from smaller to larger infinite decimals, we would see the list ordered as 0.111, then 0.112, 0.113, and so on. If we then applied diagonalization we would get 0.2 from the first real, 0.02 from the second, 0.002 from the third, and so on, resulting in 0.22 repeating as our newly generated real number. But of course, when ordered from smallest to largest decimal, 0.22 repeating would appear later in the list.

I contend that if you don't believe I applied Cantor's diagonalization properly, then you have not been careful to note the kind of infinities I used. In my prior example, I do not mix potential with actual infinity, and thereby the list of 0.11n's we enumerated earlier results in an infinite number of reals that lead with 0.11, meaning we generate an infinite series of 2's. If you think this problem is resolved by baking the idea of uncountability back into real numbers, then simply swap the naturals with the reals as I did in the pictures earlier and the quirk I just described recurs.
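The digit-swapping step itself can be sketched on a finite list. This only illustrates the mechanics on a finite prefix and says nothing either way about the infinite case. The function name `diagonal_number` and the replacement rule (write 2, or 3 if the diagonal digit is already 2) are assumptions chosen to match the 0.111, 0.112, 0.113 example above.

```python
def diagonal_number(rows: list[str]) -> str:
    """Build a digit string that differs from rows[i] at position i.

    Each row holds the digits after "0." and must be at least len(rows)
    digits long. Replacement rule (an illustrative choice): write "2",
    unless the diagonal digit is already "2", in which case write "3".
    """
    digits = []
    for i, row in enumerate(rows):
        digits.append("3" if row[i] == "2" else "2")
    return "".join(digits)


rows = ["111", "112", "113"]
new = diagonal_number(rows)
print("0." + new)

# By construction the new string disagrees with row i in its i-th digit.
for i, row in enumerate(rows):
    assert new[i] != row[i]
```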


Decimals With Multiple Infinite Sections: 1/3 = 0.33 repeating, and 2/3 = 0.66 repeating, but what numbers out of a whole give us the other repeating decimals? If we wanted to get 0.66 by dividing 1, instead of 2, by some number, we get the following:

╭──────────────╮
│ 1 ÷ x = 0.66 │
│ 1 = 0.66 • x │
│ x = 1 ÷ 0.66 │
│ x = 1.5      │
╰──────────────╯

As a controversial statement, I contend that this number is actually 1.50015. Why and how? Dividing 1 by 0.666, we get 1.5015, by 0.6666 we get 1.50015, by 0.6666666666 we get 1.50000000015, and so on. By 0.66 what we get is an infinite series of infinities, namely the infinite bar between 15's, that is 1.50015001500, repeating. This is the same as saying 1.5 with an infinite series of zeros following it, and then after infinite zeros there is a 15 followed by another infinite series of zeros, and so on. Another way of saying this is that as the divisor grows in decimal length, so too does the number of zeros between the numbers of the decimal of the quotient. Therefore with an infinitely repeating decimal divisor you get infinitely repeating zeros followed by a finite series of numbers, the set of which itself then infinitely repeats, in the quotient.

I've had people argue with me that, "This is not how fractions work," and if we were using whole-number fractions, they would be right, but I'm not concerned with whole-number fractions; the property I describe deals with numbers in decimal format. The closest thing to this I have been able to find online are Shanks' numbers, but those are distinct in scope and application. I have written out some of the other numbers below so you can see their weird properties:

╭──────────────────────────╮
│ 1 ÷ x = 0.11             │
│ 1 = 0.11 • x             │
│ x = 1 ÷ 0.11             │
│ x = 9.00900900 repeating │
╰──────────────────────────╯

This means 9.009 is the bar number attained from 0.11. To re-iterate why this happens we can follow the finite series, which results in:

╭────────────────────────────╮
│ 1 ÷ 0.1 = 10               │
│ 1 ÷ 0.11 = 9.0909          │
│ 1 ÷ 0.111 = 9.009009       │
│ 1 ÷ 0.1111 = 9.00090009    │
│ 1 ÷ 0.11111 = 9.0000900009 │
╰────────────────────────────╯

You keep adding zeros per n decimals of 0.11 from here, ultimately giving us 9.009. A list of these follows.
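The finite series can be reproduced with exact integer arithmetic instead of a calculator. A minimal sketch: `reciprocal_of_repdigit` is a name made up here, and it relies on the identity 0.dd...d (n copies of d) = d × (10^n − 1) / (9 × 10^n), so no floating-point rounding is involved.

```python
def reciprocal_of_repdigit(d: int, n: int, places: int) -> str:
    """Return 1 / 0.dd...d (n copies of digit d), truncated to `places` decimals.

    0.dd...d equals d * (10**n - 1) / (9 * 10**n), so its reciprocal is the
    exact fraction 9 * 10**n / (d * (10**n - 1)).
    """
    numerator = 9 * 10 ** n
    denominator = d * (10 ** n - 1)
    scaled = numerator * 10 ** places // denominator  # truncates, never rounds
    whole, frac = divmod(scaled, 10 ** places)
    return f"{whole}.{frac:0{places}d}"


for n in range(1, 6):
    print(f"1 ÷ 0.{'1' * n} = {reciprocal_of_repdigit(1, n, 12)}")
```

Passing `d=6` gives the 1 ÷ 0.666 and 1 ÷ 0.6666 values quoted earlier, and raising `places` shows more of each repeating block.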

For 1 ÷ 0.11 we get 9.009

For 1 ÷ 0.22 we get 4.50045

For 1 ÷ 0.33 we get 3.003

For 1 ÷ 0.44 we get 2.2500225

For 1 ÷ 0.55 we get 1.80018

For 1 ÷ 0.66 we get 1.50015

For 1 ÷ 0.77 we get 1.2857142857142857142870012857 (see below)

For 1 ÷ 0.88 we get 1.125001125

For 1 ÷ 0.99 we get 1.001

Notice the strangeness of 0.77's bar number and how no other number has the same level of noise so far. The way it resolves is somewhat dynamic too:

╭────────────────────────────────────────────────────────────────────────────────────────────────────────────╮
│ 1 ÷ 0.7 = 1.42857142857                                                                                    │
│ 1 ÷ 0.77 = 1.29870129870                                                                                   │
│ 1 ÷ 0.777 = 1.28700128700                                                                                  │
│ 1 ÷ 0.7777 = 1.28584287000128584287000                                                                     │
│ 1 ÷ 0.77777 = 1.28572714298571557144142870000128572714298571557144142870000                                │
│ 1 ÷ 0.777777 = 1.28571557142985714414285842857271428700000128571557142985714414285842857271428700000       │
│ 1 ÷ 0.7777777 = 1.28571441428572714285842857155714287000000128571441428572714285842857155714287000000      │
│ 1 ÷ 0.77777777 = 1.28571429857142870000000128571429857142870000000                                         │
│ 1 ÷ 0.777777777 = 1.28571428700000000128571428700000000                                                    │
│ 1 ÷ 0.7777777777 = 1.28571428584285714287000000000128571428584285714287000000000                           │
│ 1 ÷ 0.77777777777 = 1.28571428572714285714298571428571557142857144142857142870000000000                    │
│ 1 ÷ 0.777777777777 = 1.28571428571557142857142985714285714414285714285842857142857271428571428700000000000 │
╰────────────────────────────────────────────────────────────────────────────────────────────────────────────╯

This is strange since it resolves to an infinite period in the bar number and further there are two infinite sequences within the general infinite sequence (demarcated by the double-bars). I do not know why the bar number for 0.77 does not have a finite period length, even though all the others do.
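One possible way to account for the extra noise in the 0.77 case is to write the finite divisors as exact fractions. This is a sketch in my own notation (d for the repeated digit, n for how many times it repeats), not a derivation taken from anywhere on this page:

```latex
% d = the repeated digit, n = number of copies (notation assumed for this sketch).
\[
  0.\underbrace{dd\ldots d}_{n} \;=\; \frac{d\,(10^{n}-1)}{9\cdot 10^{n}}
  \quad\Longrightarrow\quad
  \frac{1}{0.\underbrace{dd\ldots d}_{n}}
  \;=\; \frac{9}{d}\cdot\frac{1}{1-10^{-n}}
  \;=\; \frac{9}{d}\sum_{k=0}^{\infty} 10^{-kn}.
\]
% For d = 1,2,3,4,5,6,8,9 the factor 9/d terminates: 9, 4.5, 3, 2.25, 1.8, 1.5, 1.125, 1.
% For d = 7 it does not: 9/7 = 1.285714285714..., so the shifted copies overlap and add.
```

Read this way, each quotient is the number 9/d added to copies of itself shifted right by n, 2n, 3n, ... places. When 9/d terminates, the copies sit in clean blocks separated by zeros, which matches the leading blocks in the list above. With 9/7 the copies never end, so they run into each other.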

1 ÷ 1.22 is neat, resolving as:

╭─────────────────────────────────────────────────────────────────────────────────────────────────────────────────╮
│ 1 ÷ 1.2 = 0.833                                                                                                 │
│ 1 ÷ 1.22 = 0.819672131147540983606557377049180327868852459016393442622950                                       │
│ 1 ÷ 1.222 = 0.818330605564648117839607201309328968903436988543371522094926350245499181669394435351882160392...  │
│ 1 ÷ 1.2222 = 0.818196694485354279168712158402880052364588447062673866797578137784323351333660612011127475045000 │
│ 1 ÷ 1.22222 = 0.81... (the period length is 29,216 digits long)                                                 │
╰─────────────────────────────────────────────────────────────────────────────────────────────────────────────────╯

This one is weird to me because 1 ÷ 1.22 is just 0.81, but the finite sequence leading up to it is really dynamic.
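The period lengths in the box can be computed without printing the digits. A minimal sketch, assuming the standard fact that the repeating block of a reduced fraction has length equal to the multiplicative order of 10 modulo the denominator once its factors of 2 and 5 are removed; `period_length` is a hypothetical helper name.

```python
from fractions import Fraction


def period_length(x: Fraction) -> int:
    """Length of the repeating block in the decimal expansion of x.

    Factors of 2 and 5 in the reduced denominator only affect the
    non-repeating prefix, so they are stripped first. What remains is the
    multiplicative order of 10 modulo the stripped denominator.
    """
    m = x.denominator
    for p in (2, 5):
        while m % p == 0:
            m //= p
    if m == 1:
        return 0  # terminating decimal, no repeating block
    k, r = 1, 10 % m
    while r != 1:
        r = (r * 10) % m
        k += 1
    return k


for n in range(1, 6):
    divisor = Fraction(int("1" + "2" * n), 10 ** n)  # 1.2, 1.22, 1.222, ...
    print(f"1 ÷ {float(divisor)} has period {period_length(1 / divisor)}")
```

The loop is a brute-force search, which is fine at this size but would be slow for much longer divisors.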

Most of this will probably never be looked at by anyone or be useful for anything, but since there are different kinds of infinities in math, the countable infinity in the number 0.001 can be overcome when divided by a number that is an uncountable infinity. The infinite part of 0.001 can be skipped over by an uncountable infinity, leaving the 1 at the end as a non-arbitrary part of the divisor. Maybe what I've been calling the 'bar' numbers above have a possible application in cleaning up infinities. As a big stretch, fractional divisors in equations for physical systems that result in very fuzzy statistical outcomes could probably be cleaner by exploiting this property since infinite values appear often in those systems.

Goldbach's Conjecture: If not already familiar with the conjecture, read this. All even numbers are predicated off of 2, and 2 is a prime, so all reduction of numbers predicated by 2 can be reduced to primes of the predication value, which in this case is 2 (so 2 primes). The generalization then is such that any member of a factor-tree based on some number X will inherit properties of X and will be reducible to an X-unit-count that shares at least one of those given properties.

The way this looks for the existing conjecture: Every number factorable by 2, past 2, can be expressed by 2 units of some property of 2 (namely primehood).

The way this looks for new conjectures:

1 — Every number factorable by 1 (all whole numbers), past 1, can be expressed by 1 unit of some property of 1 (namely empty product or unity/identity).

3 — Every number factorable by 3, past 3, can be expressed by 3 units of some property of 3 (namely Mersenne primes or Fermat primes); effectively the weak conjecture.

π — Every number factorable by π, past π, can be expressed by π units of some property of π (namely irrational numbers or transcendental numbers).

2 — Every even integer greater than 2 can be expressed as the DIFFERENCE of 2 primes.

The last example is an augmented version of Goldbach's Conjecture showing that unit relations are arbitrary so long as the relations are of non-arbitrary units themselves. The point of this is to show that there is a generalized form of the conjecture that lets us posit any number of equally plausible relations/patterns, and if Goldbach is right about his conjecture, then so too must all the other conjectures of the same form be correct.
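The two forms that involve 2 (the original sum version and the DIFFERENCE version) can at least be spot-checked for small even numbers. This is a brute-force sketch with made-up helper names. A finite check like this is only evidence for the numbers tested, and the `search_limit` cutoff in the difference search is an arbitrary assumption.

```python
def is_prime(n: int) -> bool:
    """Trial-division primality test, fine for small n."""
    if n < 2:
        return False
    i = 2
    while i * i <= n:
        if n % i == 0:
            return False
        i += 1
    return True


def sum_of_two_primes(n: int):
    """Return primes (p, q) with p + q == n and p <= q, or None."""
    for p in range(2, n // 2 + 1):
        if is_prime(p) and is_prime(n - p):
            return p, n - p
    return None


def difference_of_two_primes(n: int, search_limit: int = 10_000):
    """Return primes (p, q) with q - p == n and p < search_limit, or None."""
    for p in range(2, search_limit):
        if is_prime(p) and is_prime(p + n):
            return p, p + n
    return None


for n in range(4, 21, 2):
    print(n, sum_of_two_primes(n), difference_of_two_primes(n))
```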
